Using MatCal to Perform Mathematical and Logical Calculations in Modern Requirements Management

What is MatCal?

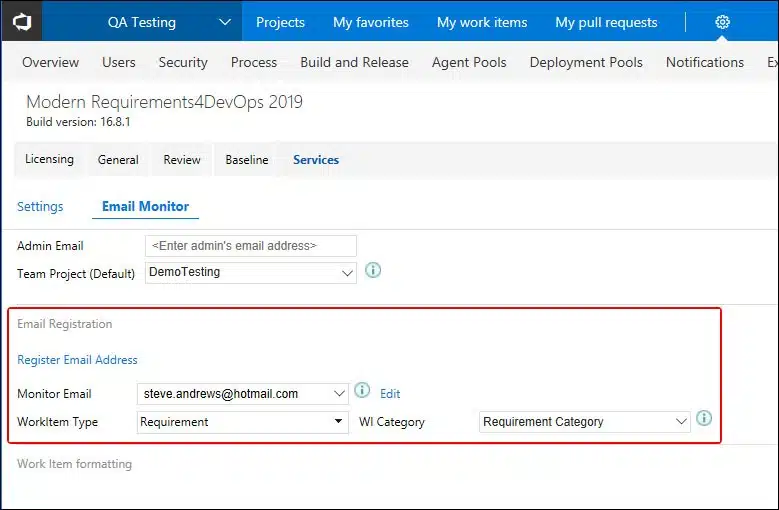

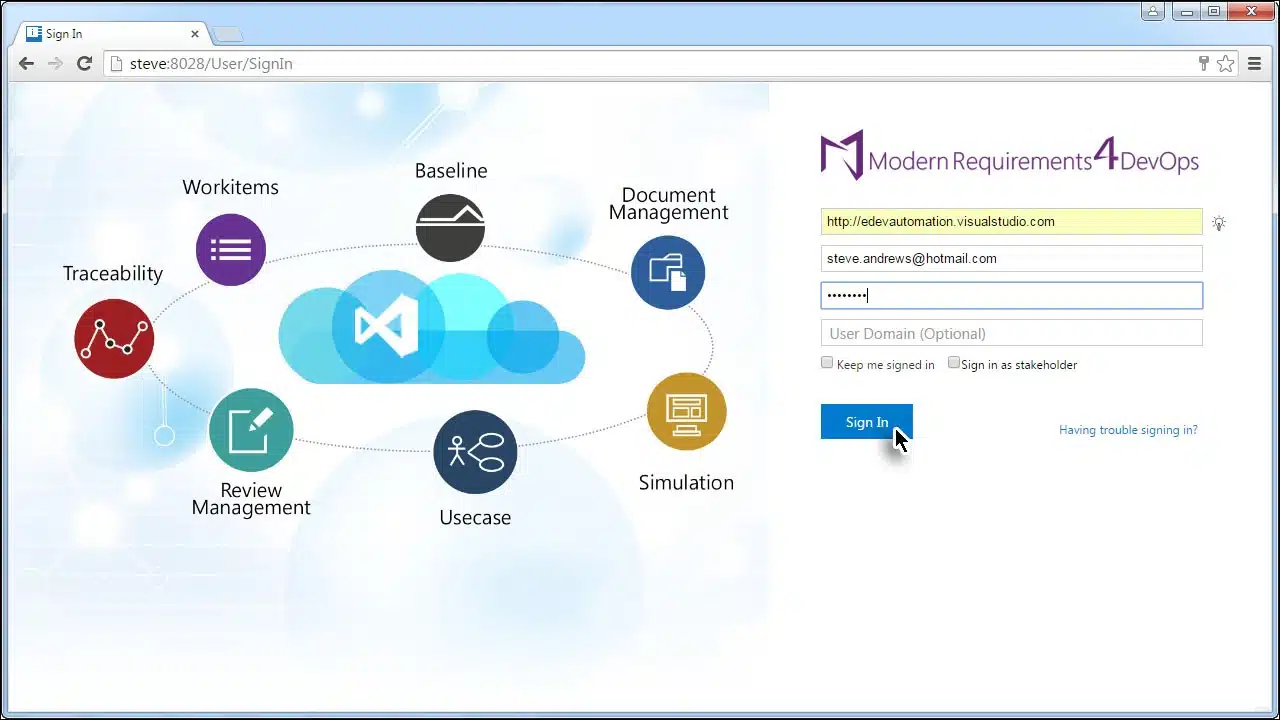

MatCal is a feature in Modern Requirements4DevOps that evaluates mathematical and logical expressions on work items.

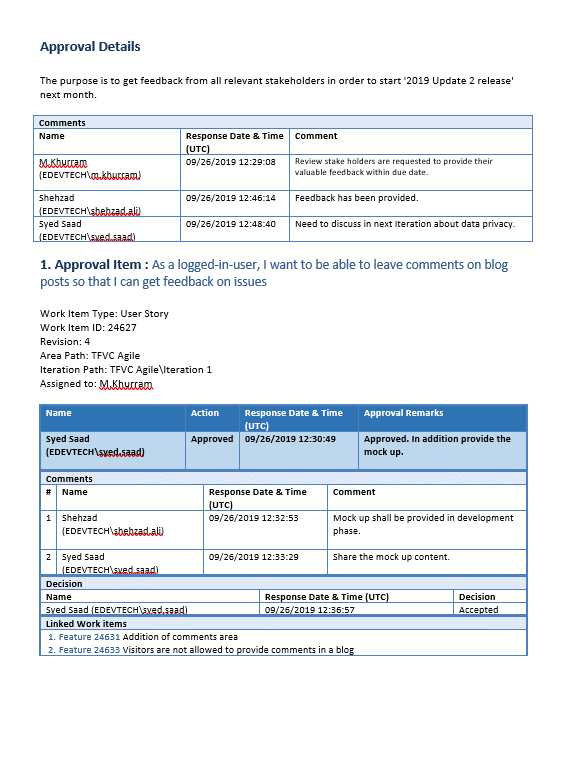

Why we need MatCal in Requirements Management

MatCal lets you manage the relationships between work item properties in a smarter way. It eliminates the manual effort of performing calculations outside the project environment and reduces the risk of introducing incorrect results into your projects.

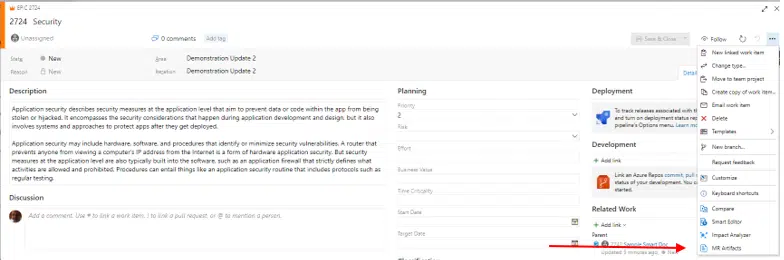

Let’s look at a simple example that illustrates a relationship between work item properties.

Business Value and Priority are properties of the Feature work item. Normally, a high Business Value leads to a high Priority.

With the right configuration, MatCal can manage this relationship for you by automatically assigning a Priority value based on the Business Value you enter.
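The rule behind such a configuration can be sketched in a few lines of Python. This is only an illustration of the logic, not MatCal's own expression syntax. The function name `priority_from_business_value`, the 0 to 100 Business Value scale, and the thresholds are all assumptions made for this example. Priority is assumed to run from 1 (highest) to 4 (lowest).

```python
def priority_from_business_value(business_value: int) -> int:
    """Return a Priority (1 = highest, 4 = lowest) for a given Business Value.

    The thresholds below are hypothetical; a real project would pick its own.
    """
    if business_value < 0:
        raise ValueError("Business Value must not be negative")
    if business_value >= 75:
        return 1  # very high value: top priority
    if business_value >= 50:
        return 2
    if business_value >= 25:
        return 3
    return 4      # low value: lowest priority


if __name__ == "__main__":
    for value in (90, 60, 30, 10):
        print(value, "->", priority_from_business_value(value))
```

Once a rule like this is evaluated inside the project, nobody has to look up the Priority by hand each time the Business Value changes.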

Industry Use Scenarios

Scenario 1: Automotive Safety Integrity Level (ASIL) in ISO 26262

Scenario 2: Risk rating is automatically assigned according to Severity score and Occurrence score

Scenario 3: Priority rating is automatically assigned according to Severity score and Likelihood score
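Scenarios 2 and 3 follow the same pattern: two numeric scores are combined and the result is mapped to a rating. The Python sketch below shows one common way to do this. It is an illustration only, not MatCal syntax. The 1 to 5 score range, the multiplication rule, the thresholds, and the name `risk_rating` are all assumptions made for this example.

```python
def risk_rating(severity: int, occurrence: int) -> str:
    """Combine two 1-5 scores into a rating (hypothetical thresholds)."""
    for name, score in (("severity", severity), ("occurrence", occurrence)):
        if not 1 <= score <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {score}")

    combined = severity * occurrence  # ranges from 1 to 25

    if combined >= 15:
        return "High"
    if combined >= 6:
        return "Medium"
    return "Low"


if __name__ == "__main__":
    print(risk_rating(5, 4))  # combined score 20
    print(risk_rating(2, 3))  # combined score 6
    print(risk_rating(1, 5))  # combined score 5
```

For Scenario 3, the same function applies with a Likelihood score in place of the Occurrence score. A lookup table could replace the multiplication when the rating does not follow a simple product, as is the case for standards that define their own matrix.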